The more, the better, right? Buying a new monitor shouldn’t be that big of a decision, but you should consider certain aspects before making your final choice. The big dilemma of this day and age is choosing between the 2560×1440 and 1920×1080 resolutions. Even if you have no IT knowledge, you can probably guess that these numbers have to do with the monitor’s image quality. The first pair of numbers is obviously larger than the second, but we’ll still break down these two resolutions and see what they’re all about.

In this article, we’ll first explain some terms you should know and see how these two resolutions compare on each of them. After that, we’ll try to simplify your purchasing decision by walking through several scenarios you might find yourself in and offering our recommendation for each one.

Basic info about 2560 x 1440 and 1920 x 1080

The 2560×1440 resolution is often referred to as QHD (Quad HD) or WQHD (Wide Quad HD) or 1440p, whereas the 1920×1080 resolution is called Full HD or 1080p. These are the most common resolutions in modern monitors, so let’s break down their most important characteristics!

Screen resolution

First of all, you should know what these numbers stand for. They describe the screen (display) resolution, which is how many pixels can be displayed simultaneously on the screen. The more pixels an image contains, the finer the details it can show. For instance, check out how various classic games evolved as more and more pixels enabled developers to represent very complex models:

A screen’s resolution is usually written as $\text{width} \times \text{height}$, where width and height are the number of columns and rows (respectively) of pixels used to form the image. In other words, an image is just a matrix of differently colored pixels.

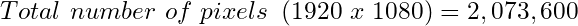

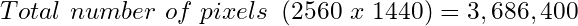

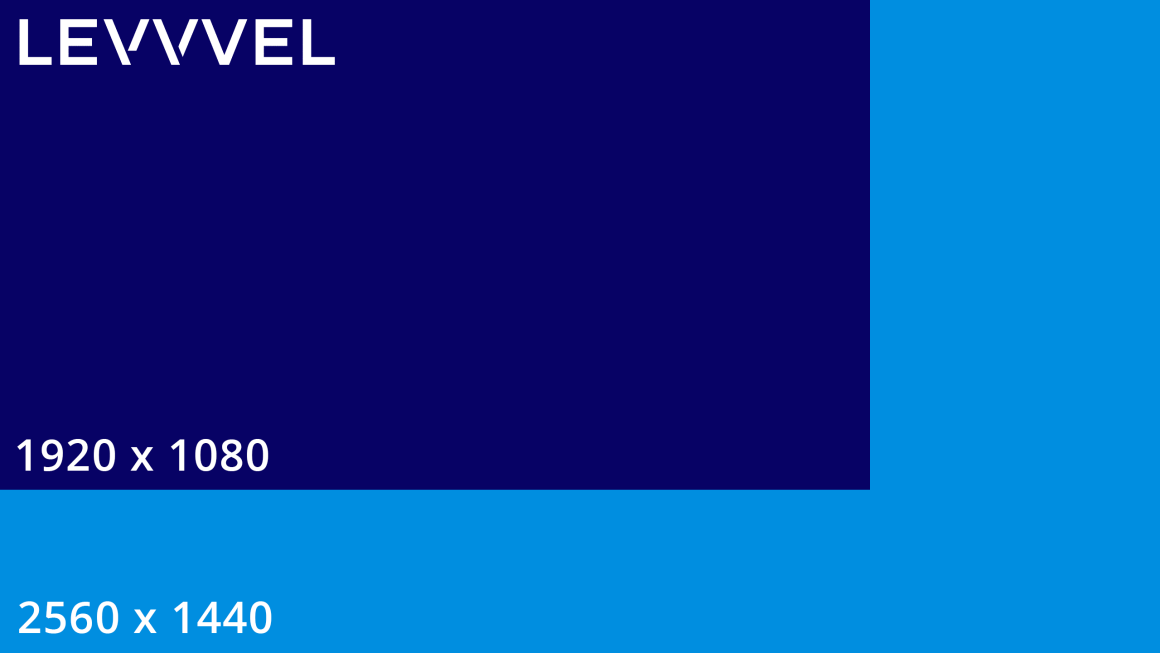

It’s clear that the 2560×1440 resolution offers more than 1920×1080 simply because both of its numbers are larger. Even though the width and height each increase by only 33%, the real difference lies in the total number of pixels on the screen. You can obtain this number simply by multiplying the screen’s width and height in pixels:

$$1920 \times 1080 = 2{,}073{,}600 \text{ pixels}$$

$$2560 \times 1440 = 3{,}686{,}400 \text{ pixels}$$

The 2560×1440 screen has around 1.6 million (1,612,800) more pixels than the 1920×1080 screen, which is a 77.78% increase in pixel count.
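
The arithmetic above is easy to check in a few lines of Python. This is only a quick sketch. The helper names `total_pixels` and `percent_increase` are our own and do not come from any library.

```python
# Width x height, in pixels, for the two resolutions compared in this article.
RESOLUTIONS = {
    "1080p": (1920, 1080),
    "1440p": (2560, 1440),
}

def total_pixels(width: int, height: int) -> int:
    """Number of pixels on a width x height screen."""
    return width * height

def percent_increase(old: float, new: float) -> float:
    """Relative increase from old to new, in percent."""
    return (new - old) / old * 100

fhd = total_pixels(*RESOLUTIONS["1080p"])
qhd = total_pixels(*RESOLUTIONS["1440p"])

print(f"1920x1080: {fhd:,} pixels")
print(f"2560x1440: {qhd:,} pixels")
print(f"Difference: {qhd - fhd:,} pixels ({percent_increase(fhd, qhd):.2f}% more)")
# Width and height both grow by the same factor (2560/1920 == 1440/1080).
print(f"Width/height increase: {percent_increase(1920, 2560):.2f}%")
```

The sketch shows why a 33% increase on each axis becomes a 77.78% increase in pixel count. Both axes grow by a factor of 4/3, and (4/3)² ≈ 1.78.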

Aspect ratios

The aspect ratio of a screen is, by definition, the ratio between the screen’s width and height. Technically, it can be expressed as a single number you get by dividing the screen’s width by its height:

$$\text{aspect ratio} = \frac{\text{width}}{\text{height}}, \qquad \text{e.g. } \frac{1920}{1080} \approx 1.78$$

However, it’s commonly written as two numbers separated by a colon. Monitors and TVs usually have either the 4:3 or the 16:9 aspect ratio. You can derive a screen’s aspect ratio by dividing both its width and its height in pixels by their greatest common divisor.

For our resolutions, the answer is 16:9 in both cases. So, if your current monitor’s aspect ratio is also 16:9, you should have no problems adjusting to either of these resolutions!
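
Here is a minimal sketch of that reduction using Python’s built-in `math.gcd`. The `aspect_ratio` helper is a name chosen for illustration. The sketch also prints the single-number form (width divided by height) for comparison.

```python
from math import gcd

def aspect_ratio(width: int, height: int) -> tuple[int, int]:
    """Reduce width:height by their greatest common divisor."""
    divisor = gcd(width, height)
    return width // divisor, height // divisor

for w, h in [(1920, 1080), (2560, 1440)]:
    rw, rh = aspect_ratio(w, h)
    print(f"{w}x{h}: gcd = {gcd(w, h)}, aspect ratio = {rw}:{rh} ({w / h:.3f})")
```

The greatest common divisor is 120 for 1920×1080 and 160 for 2560×1440. Both reduce to 16:9. The same function gives 4:3 for a 1024×768 screen, where the divisor is 256.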

Two fundamental concepts for understanding monitors are resolution and pixel density. A monitor’s resolution dictates how many pixels there are and at what ratio (width to height). For example, in the 2560 x 1440 resolution, 2560 is the width and 1440 is the height. All resolutions are written this way, with the width first and the height second. Some simple math shows how big an improvement a jump from 1920 x 1080 to 2560 x 1440 is.

Pixel density

The screen resolution doesn’t tell the whole story, because it says nothing about the screen’s physical dimensions. Having more pixels is great, but what is a pixel, and how big is it? Well, that depends on the screen. Pixel density tells us how densely pixels are packed on the screen or, in effect, how big an individual pixel is. For instance, a large screen with a low pixel density will look pixelated, which means you can make out individual pixels as small colored squares.

Large screens need more pixels to preserve their image quality, and smaller screens need fewer. That’s why pixel density is a better parameter for judging a display’s quality. It is (roughly) the ratio between the number of pixels on a screen and the screen’s size.

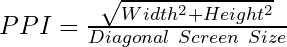

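More precisely, it’s calculated from the resolution and the screen’s diagonal size $d$ in inches:

$$\text{PPI} = \frac{\sqrt{\text{width}^2 + \text{height}^2}}{d}$$

where PPI stands for pixels per inch. Here are PPI calculations for some common monitor sizes: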

| Resolution | Total Pixels | 24″ Monitor | 27″ Monitor | 31.5″ Monitor |
|---|---|---|---|---|
| 1920 x 1080 | 2,073,600 | 92 PPI | 82 PPI | 70 PPI |
| 2560 x 1440 | 3,686,400 | 122 PPI | 109 PPI | 93 PPI |
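
To see where the figures in the table come from, this sketch applies the pixels-per-inch formula: the diagonal length in pixels divided by the diagonal size in inches. It uses `math.hypot` to get the diagonal. We assume the last column refers to a 31.5-inch panel, because that diagonal reproduces its 70 and 93 PPI values. The `ppi` helper name is our own.

```python
from math import hypot

def ppi(width_px: int, height_px: int, diagonal_in: float) -> float:
    """Pixels per inch: diagonal length in pixels / diagonal size in inches."""
    return hypot(width_px, height_px) / diagonal_in

SIZES_IN = [24, 27, 31.5]  # 31.5 is our assumption for the table's last column

for w, h in [(1920, 1080), (2560, 1440)]:
    row = ", ".join(f'{size}": {ppi(w, h, size):.0f} PPI' for size in SIZES_IN)
    print(f"{w}x{h} -> {row}")
```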

This is an essential factor for anyone looking to purchase a large monitor: the larger the monitor, the higher its resolution needs to be to preserve the same pixel density. Many users treat a pixel density of 90-110 PPI as the gold standard. So, if your monitor is 24” or smaller, you can settle for the 1920×1080 resolution. With larger monitors, however, the 2560×1440 resolution stands out and should be used instead.
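
You can also turn the formula around and ask which screen sizes keep a given resolution inside the 90-110 PPI window mentioned above. This is a rough sketch that assumes that window. `sweet_spot_sizes` is a hypothetical helper, and its output is only as meaningful as the 90-110 PPI rule of thumb itself.

```python
from math import hypot

def diagonal_for_ppi(width_px: int, height_px: int, target_ppi: float) -> float:
    """Screen diagonal (inches) at which this resolution hits target_ppi."""
    return hypot(width_px, height_px) / target_ppi

def sweet_spot_sizes(width_px: int, height_px: int,
                     low: float = 90.0, high: float = 110.0) -> tuple[float, float]:
    """Smallest and largest diagonal (inches) keeping PPI within [low, high]."""
    # A higher PPI means a smaller screen, so the upper PPI bound gives the smallest size.
    return (diagonal_for_ppi(width_px, height_px, high),
            diagonal_for_ppi(width_px, height_px, low))

for w, h in [(1920, 1080), (2560, 1440)]:
    smallest, largest = sweet_spot_sizes(w, h)
    print(f"{w}x{h}: about {smallest:.1f} to {largest:.1f} inches for 90-110 PPI")
```

For 1920×1080 this works out to roughly 20 to 24.5 inches. For 2560×1440 it is roughly 26.7 to 32.6 inches. Both ranges line up with the advice to stop at 24 inches for 1080p and to go with 1440p beyond that.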

Theoretically, the higher the pixel density, the better. However, the human eye is the limiting factor here: it has been reported that the human eye can resolve a maximum of around 300 PPI from a distance of 2.5 ft. Also, since most content is still created for a pixel density of about 90-100 PPI, many things might appear quite small on a high-PPI screen. That’s why 90-110 PPI is the sweet spot you should aim for, instead of going all in and overdoing it.

What difference does it make?

Different people buy monitors for different purposes. What you do on your computer every day largely determines whether you’ll benefit from a higher resolution. Higher is almost always better, but the important question is: is it worth it? Will you be able to take advantage of everything the higher resolution offers? Let’s check out several scenarios you might find yourself in when making your monitor purchase!

Can you tell the difference between 1440p and 1080p?

This brings us to another important point: will you notice the difference? After all, if you can’t, why bother sinking your money into a higher-resolution monitor? The answer depends primarily on your viewing distance. In other words, how far you sit from your monitor plays a big role in how noticeable the difference between the resolutions is.

Everybody’s desk size and seating preference vary, but generally, if you’re sitting less than two feet away, the difference should be clear. Regardless of what you’re using your computer for, you’ll notice the difference at that distance.

Light browsing? Yes, you’ll notice. Playing a game like CS:GO while sitting very close to the monitor? Yes, you’ll notice.

The difference in everyday use

Even though high resolutions and large monitors are commonly associated with gaming, many other users can benefit from large displays. In everyday use, many people like to open multiple windows next to each other for multitasking. Suppose, for instance, that you decide to pair the 2560×1440 resolution with a 27-inch monitor. You’ll quickly notice the advantage, because you can comfortably view two browser windows side by side. For example, you could watch an online tutorial on YouTube in one window while following along in the other.
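
To put a number on the side-by-side setup, here is a small sketch that splits the screen width evenly between windows. It ignores window borders, taskbars, and OS display scaling. `split_width` is just an illustrative helper.

```python
def split_width(screen_width: int, windows: int) -> int:
    """Width in pixels of each window when tiled side by side (borders ignored)."""
    return screen_width // windows

SCREENS = {"1920x1080": (1920, 1080), "2560x1440": (2560, 1440)}

for name, (width, height) in SCREENS.items():
    half = split_width(width, 2)
    print(f"{name}: two windows of {half}x{height} ({half * height:,} pixels each)")
```

Each half of a 2560×1440 screen is 1280×1440, or 1,843,200 pixels. That is close to the 2,073,600 pixels of an entire 1920×1080 screen. Each half of a 1080p screen is only 960×1080.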

However, if you’re viewing low-quality content on a 2560×1440 screen, you’ll quickly notice that it is quite unpleasant to watch in full screen. Watching 720p movies or videos in fullscreen mode is painful on a 1440p screen, but still quite pleasant on a 1080p screen. That being said, switching to 1440p purely for everyday use might be premature, as online content has yet to fully transition to higher resolutions. The benefit is also limited by connection speeds and by older devices whose display interfaces don’t even support these resolutions.

The difference in professional use

Professionals who work primarily on their computers (working from home, freelancing) might benefit greatly from higher resolutions and larger monitors. Seeing more is better in these cases, and this applies to many different careers. Also, when your work requires you to look at your computer all day, staring at a poor-quality monitor can become tiring.

For instance, developers can see a larger portion of their code at once, which saves time when debugging or looking for a particular line. If you are a web or app developer, you can also split your screen into two parts: one for writing code and one for viewing the result in a separate window.

Also, people who work with digital art, video editing, or computer-aided design (CAD) will be thrilled to see their work on a larger, more detailed screen, where they can spot details more easily. This adds to the overall quality of their work.

Professional use, unlike everyday use, is all about large screens and having as many pixels as possible. A good higher-resolution monitor can be a worthwhile investment that pays off in your career and makes a notable difference beyond being eye candy.

However, there is an important alternative to buying a single 1440p display: buying two 1080p displays! Seriously, it’s cheaper, and it brings more actual, physical space into your working setup. A 1440p display offers 77.78% more pixels than a 1080p one, but it doesn’t offer that much more space. Two monitors can be more useful because they can be used independently, each with its own desktop and open windows. We’ll leave you to think about this!
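
The pixel math behind this trade-off is quick to sketch. Assuming two identical 1920×1080 monitors placed side by side (bezels ignored), the pair actually offers more pixels than a single 2560×1440 screen:

```python
FHD_PIXELS = 1920 * 1080  # one Full HD screen
QHD_PIXELS = 2560 * 1440  # one QHD screen

# Two 1080p monitors side by side (bezels ignored) form a 3840x1080 workspace.
dual_fhd_pixels = 2 * FHD_PIXELS

print(f"One 2560x1440 display:  {QHD_PIXELS:,} pixels")
print(f"Two 1920x1080 displays: {dual_fhd_pixels:,} pixels (3840x1080 combined)")
print(f"Ratio: {dual_fhd_pixels / QHD_PIXELS:.3f}")
```

Two 1080p screens give 4,147,200 pixels. That is exactly 12.5% more than one 1440p screen, although the pixels are spread over two separate panels.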

The difference in gaming

Finally, we reach the aspect of buying a new monitor that most users talk about. The number one question everyone asks is whether they’ll see a noticeable difference in their gaming experience. Monitors with higher resolutions are noticeably more expensive, and no one wants to invest in something they can’t see and that doesn’t improve their gaming in any way.

Well, the most common answer is that the difference is there. People who started gaming at 1440p simply haven’t looked back, and some have even mentioned that going back to 1080p hurt their eyes. So, should you go for it if you have the budget?

Well, not before consulting your graphics card! Playing at a higher resolution forces your graphics card to render many more pixels per frame, which can take a big toll on its performance. If it can’t keep up, your framerate will likely drop and ruin your gaming experience! You should always consult online benchmarks or forums to see whether your current setup can handle a 1440p resolution at a steady 60 FPS (frames per second). If you’re buying a monitor with a 144 Hz refresh rate, check for 144 FPS instead.
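
One way to see why the graphics card matters is to look at how little time each frame gets and how many pixels must be produced every second. This is only a rough sketch. Pixel count is a crude proxy for GPU load, and the real cost depends heavily on the game and its settings. The helper names are our own.

```python
def frame_budget_ms(target_fps: float) -> float:
    """Time available to finish each frame if the target frame rate is to be sustained."""
    return 1000.0 / target_fps

def pixels_per_second(width: int, height: int, fps: float) -> float:
    """Pixels that must be produced every second at the given frame rate."""
    return width * height * fps

for fps in (60, 144):
    print(f"{fps} FPS -> {frame_budget_ms(fps):.2f} ms per frame")
    for w, h in [(1920, 1080), (2560, 1440)]:
        mpx = pixels_per_second(w, h, fps) / 1e6
        print(f"  {w}x{h}: {mpx:.1f} million pixels per second")
```

At 144 FPS each frame has under 7 ms. A 1440p screen at that rate needs about 531 million pixels per second, compared with about 299 million at 1080p.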

For example, in tests of Battlefield 1 on a gaming rig equipped with a GTX 1070 (roughly equal to an RX Vega 56), the average frame rate drops from around 115 FPS to 85 FPS when moving from 1080p to 1440p. That’s a decrease of around 30 FPS, which is nothing to scoff at. So, if you’re thinking about upgrading, be sure to budget for a new graphics card if necessary.
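
As a sanity check on those numbers, compare them with a naive model in which frame rate falls in direct proportion to the number of pixels rendered. This model is our simplifying assumption, not a description of how GPUs actually behave. The 115 and 85 FPS inputs are the approximate figures quoted above.

```python
FHD_PIXELS = 1920 * 1080
QHD_PIXELS = 2560 * 1440

def naive_fps_estimate(fps_at_1080p: float) -> float:
    """Assume FPS falls in direct proportion to the number of pixels rendered."""
    return fps_at_1080p * FHD_PIXELS / QHD_PIXELS

# Approximate Battlefield 1 / GTX 1070 averages quoted in the text.
reported_1080p, reported_1440p = 115, 85

estimate = naive_fps_estimate(reported_1080p)
print(f"Pixel-proportional estimate at 1440p: {estimate:.1f} FPS")
print(f"Reported average at 1440p: about {reported_1440p} FPS")
print(f"Reported drop: {(reported_1080p - reported_1440p) / reported_1080p:.0%}")
```

The naive model predicts roughly 65 FPS, noticeably lower than the reported 85. This is presumably because part of each frame’s work does not grow with resolution. That gap is exactly why real benchmarks for your own card and games are worth checking.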

If you have any doubts about your current setup, you should stick with 1080p and enjoy smooth gameplay rather than risk a large amount of money on a monitor whose resolution ruins your gaming. You can still lower the in-game resolution to 1080p on a 1440p monitor, but that would probably defeat the main reason you bought a 1440p monitor in the first place!

Bottom line

Objective thoughts

It’s all about context. We love embracing new technologies, including larger screens, and if your budget allows, you should go for it. However, try to hit the ideal pixel density (90-110 PPI) and make sure you can fully utilize the higher resolution. If your graphics card can’t handle it, or if you’re just a casual computer user, you should save your money for something else!

Our opinion

If you go from a 24-inch 1080p monitor to a 24-inch 1440p monitor, can you even appreciate the PPI difference at the same size?

Our opinion?

Absolutely.

You’re looking at a jump from 92 PPI to 122 PPI. That’s a considerably bigger jump than going to the 109 PPI of a 27-inch 1440p monitor. Give a 1440p monitor a chance for a few days and then go back to your 1080p monitor: you’ll ask yourself how you ever put up with it. Looking at pictures or YouTube videos of the monitor you’re interested in won’t show its true image quality or the difference between 2560 x 1440 and 1920 x 1080. Aside from ordering the monitor you’re eyeing (I couldn’t help it), the next best thing is to visit your local electronics store and see how it looks in person.

Should you get a 2560 x 1440 monitor? Yes, but with a few things to keep in mind.

Since the PPI is higher, you will notice a bigger difference with a 24-inch monitor at 1440p than with a 27-inch one. You will still see an improvement on a 27-inch monitor, just not as much.

First, decide whether a larger screen or a more detailed image is more important to you. Keep in mind that regardless of which size you choose, you are still increasing your resolution.

Second, your graphics card will have to process the same increase in the number of pixels regardless of screen size. That leaves a few scenarios:

- You have a very good graphics card: take the frame rate hit and carry on as you did before.

- You have a decent graphics card: lower your in-game graphics settings.

- You have an older or weaker graphics card: it won’t be able to keep up, and you will need to upgrade it.

Ultimately, it all depends on your size vs. PPI preference, your graphics card situation, and your budget.